I was recently given a PC by a former colleague whose child had been using it. They upgraded, and getting rid of an old computer is easy when someone is willing to take it, make sure the data is erased, and dispose of it.

Thing is, this was an OK PC. Nice case, MSI motherboard, DDR3 RAM, Intel i5-3570K CPU, 250GB SSD, and an Nvidia GTX 1060 6GB card. Gee, it would be a shame to let this go to waste. I quickly got to reinstalling Windows 10 on the system, which also has a PCIe x1 wireless card, and installed Steam. Okay, that was easy. Download some games and see what happens…

Not great, considering the “gaming” PC I have is an i7-9700F with a 4060 16GB, but it’s passable in a pinch and playable with lower graphics settings. This is especially handy when your gaming PC keeps inexplicably crashing during games, most notably and frustratingly Cyberpunk 2077.

Now I know that Cyberpunk had its fair share of issues when it launched, and it still has them in mid-2025, but crashing isn’t one of them. I’ve played many hours of this game on a 10th gen Intel with a mobile 2070 and it’s been flawless. Like many tech people, though, I’m far more hesitant to reinstall the operating system on a computer I use than on someone else’s.

Windows 10 is also nearly at the end of its support, so I figured that trying out Ubuntu Linux with Nvidia drivers and Steam’s compatibility layer for Windows games would be easy to do on the backup PC. How hard could it be? Not too hard, but it wasn’t easy either.

I grabbed another SATA 250GB SSD and plugged it in, removing the SATA cable from the Windows drive first, then booted to the Ubuntu 24.04 LTS installer and got to work. Installation took longer than I expected, but given that this CPU is over 10 years old I was patient. On rebooting things looked good. I installed Steam from the store, then got logged in and started the 55GB download.

I was disappointed when I clicked Play that it synced the cloud save, then seemed to start, but went immediately back to Play. No messages, no windows, just a loop. Great! How unexpected. Linux strikes again! We go way back, so 30 years of disappointment isn’t misplaced.

Okay, so now what? I found a guide that suggested putting a few key startup parameters in place to prevent the CDPR launcher from starting, which it normally does. This is a good step, and the flags given were useful elsewhere also:

--intro-skip -skipStartScreen --launcher-skip

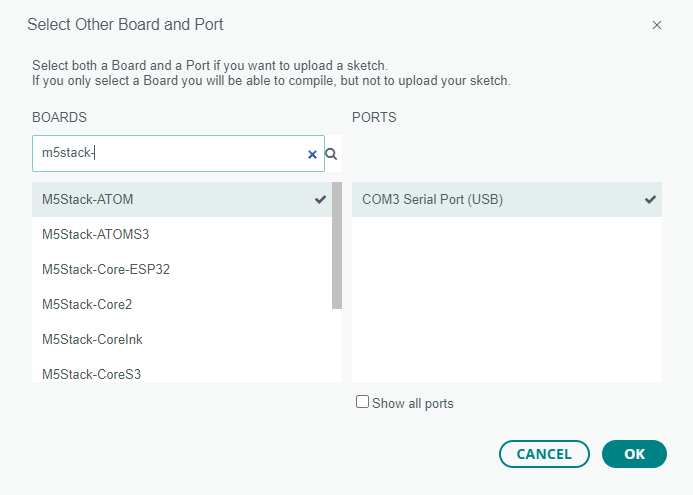

After doing this, well, nothing changed, so I dug deeper. On that same thread a poster said that they used those flags with a specific version of Proton, Steam’s compatibility layer for Windows games, so I started changing that under the game’s Properties. I finally found that Proton version 9.0-4 would “launch” without looping, even though the game still didn’t work. This was progress, because at least the game was theoretically running. Clicking Stop exited it, though nothing but the button visually changed.

I’d seen an error message when loading Steam saying that I only had the 64-bit drivers from Nvidia installed, and it recommended installing the 32-bit ones as well. I tried this, following the instructions, but it only made things worse. That, and I likely messed up the proprietary drivers that Ubuntu had previously installed. Frustrated, I gave up for the day but vowed to think about it and try again.

It seemed like the installation process went far too fast when I had “installed” the Nvidia drivers. It’s likely that I just installed some libraries, not the drivers themselves, so I went about doing that from the command line:

sudo ubuntu-drivers install nvidia:570

This took much longer than before, and looked like it was actually doing something. It paused for quite a long time at 95%, but with some patience it finished convincingly. That done, a quick reboot showed my desktop once again in the proper 1080p resolution, so that was one step closer.
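In hindsight, a quick check from the terminal would have told me whether I had really installed the driver or just some libraries. This is only a rough sketch, not something I ran at the time: the two function names are made up for illustration, and I’m assuming that the 32-bit libraries Steam complained about come from Ubuntu’s i386 architecture, which may not apply to every way of installing Steam.

```shell
#!/bin/sh
# Hypothetical helper: report the GPU and loaded driver version,
# or say so plainly when the NVIDIA tools are missing.
check_nvidia_driver() {
  if command -v nvidia-smi >/dev/null 2>&1; then
    # One CSV line per GPU: name, driver version.
    nvidia-smi --query-gpu=name,driver_version --format=csv,noheader
  else
    echo "nvidia-smi not found: the driver itself is probably not installed"
  fi
}

# Hypothetical helper: report whether 32-bit (i386) packages are enabled.
# Assumption: Steam's 32-bit warning relates to i386 packages on Ubuntu.
check_i386_enabled() {
  if command -v dpkg >/dev/null 2>&1 &&
     dpkg --print-foreign-architectures | grep -qx i386; then
    echo "i386 architecture enabled"
  else
    echo "i386 architecture not enabled"
  fi
}

check_nvidia_driver
check_i386_enabled
```

If the first check names the card and a driver version, the driver is loaded and talking to the GPU. If it reports that nvidia-smi is missing, the earlier “install” most likely never put the driver in place.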

I checked the properties of the game once more, verified the version, and clicked Play. Once again it seemed to start, then something popped up but flashed away, then for the first time the screen went black. I waited, then waited more. 10 seconds became 20, and I did an ALT+TAB to see if things were still running. I switched back to the desktop, then returned to the game. The screen was still blank, and I paused when suddenly the intro screen appeared.

Okay, I thought, this was a step forward. Things rolled right in as though it was meant to work. The main menu loaded, and after selecting Continue on my most recent save, I waited for it to load. This also paused for quite a long time before starting the loading process, but given some recent experience with the texture loading times and pre-rendering that happens now, this wasn’t a surprise.

I loaded into Kabuki, and looked around. The settings were familiar; slow and low, but pretty good. The equal of Windows, I thought, after a few minutes of jumping and dashing around. I quickly set a frame limit at 30FPS, just to be consistent, and started playing my post-ending character.

One quirk that went away quickly was a handful of cars driving by that were just the driver and a frame, with no textures over the body itself. I captured a screenshot of this, but seconds later all the cars were properly dressed in their panels.

30 minutes of gaming, crossing the city up to the Arasaka Waterfront, turned up nothing but good results. The menus ran well, and disassembling components was far quicker than it had been in Windows. So far, with only two real changes to the configuration of Steam and the game, I am very impressed.

I then installed Crysis 2 Remastered, an all-time favorite, to see how well the CRYENGINE works in Proton. As it happens, very well it seems. The game launched without any modifications, and the introduction ran fine. Only when doing the visor demo did the game crash, but it launched back up and I was able to play another 10 minutes without issue at a solid 60FPS.

Would I recommend doing this? Yes. If you have a gaming PC that you use only for games, try it with another drive and see how it works. Dual-boot is totally an option, and not a terrible one now that SSDs are ubiquitous and OS loading times are very short. Especially try this if you have a spare computer capable of doing it and in danger of falling out of support from Microsoft.